THE WEEKLY NEWSLETTER OF AIM. Monday, Apr 15, 2024 | By Amit Naik

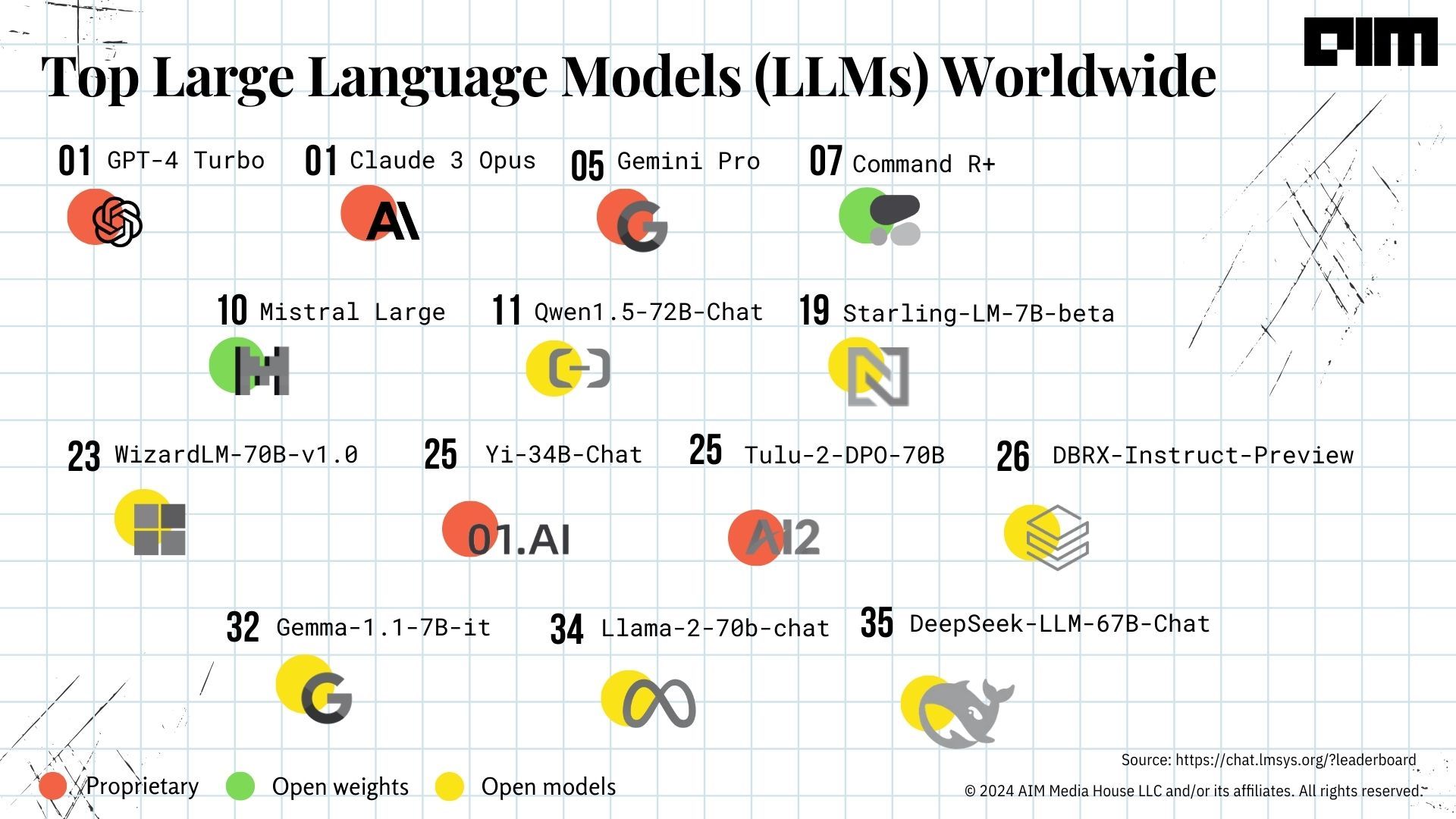

Among all the LLMs that made it to the leaderboard, Cohere’s Command R+ and Mistral Large clearly stand out. They are catching up in performance to more established, proprietary models, which is a significant step forward for open-source AI development and sets the bar for Llama 3 and GPT-5 going forward. Benchmarks like LMSys may not be fully accurate, as they are likely limited by human evaluation capabilities, but the platform helps in understanding current advancements in the LLM landscape at a global scale.

Unsurprisingly, India’s open-source AI revolution has already started, and 20-year-old developers are leading it. Read the full story here.

Hindi Mein LLM: In the latest episode of Analytics Guru, AIM explores LLMs, detailing their function, training, and applications, and examines the future impact of AI technology on our daily interactions.

AI Backbone: Today, hundreds of companies are advancing generative AI by developing foundational models, providing infrastructure, and sourcing talent, collectively driving rapid innovation in the field. Here are some of the notable AI companies: (See image below)

Also, check out the list of top LMS platforms that are perfect for enterprises looking to enhance their AI capabilities.

Avi Wigderson, a prominent computer scientist, won the prestigious 2023 Turing Award for his transformative contributions to understanding randomness in computation and his leadership in theoretical computer science.

In an exclusive interaction with AIM, Karthikeyan Viswanathan, the chief of DataSwitch, takes us through the journey of integrating data from various sources and enhancing data-driven decision-making. He also discusses the challenges posed by fragmented data systems that hinder business analytics.

- Google recently introduced a new Arm-based processor, Axion, which offers a 30% improvement in performance compared to general-purpose Arm chips and a 50% increase over the current-generation x86 chips made by Intel and AMD.

- Google also introduced the TPU v5p chip, configured to operate in large-scale pods containing up to 8,960 chips, providing double the raw performance of the previous generation of TPUs.

- Meta recently unveiled its next-generation AI chip, the Meta Training and Inference Accelerator (MTIA), which more than doubles previous solutions' compute and memory bandwidth, specifically enhancing the efficiency of ranking and recommendation models in Meta's apps and services.

- Intel's new Gaudi 3 AI accelerator chip, revealed at the Vision 2024 event, is claimed to deliver 50% faster performance on AI language models than NVIDIA’s H100.
