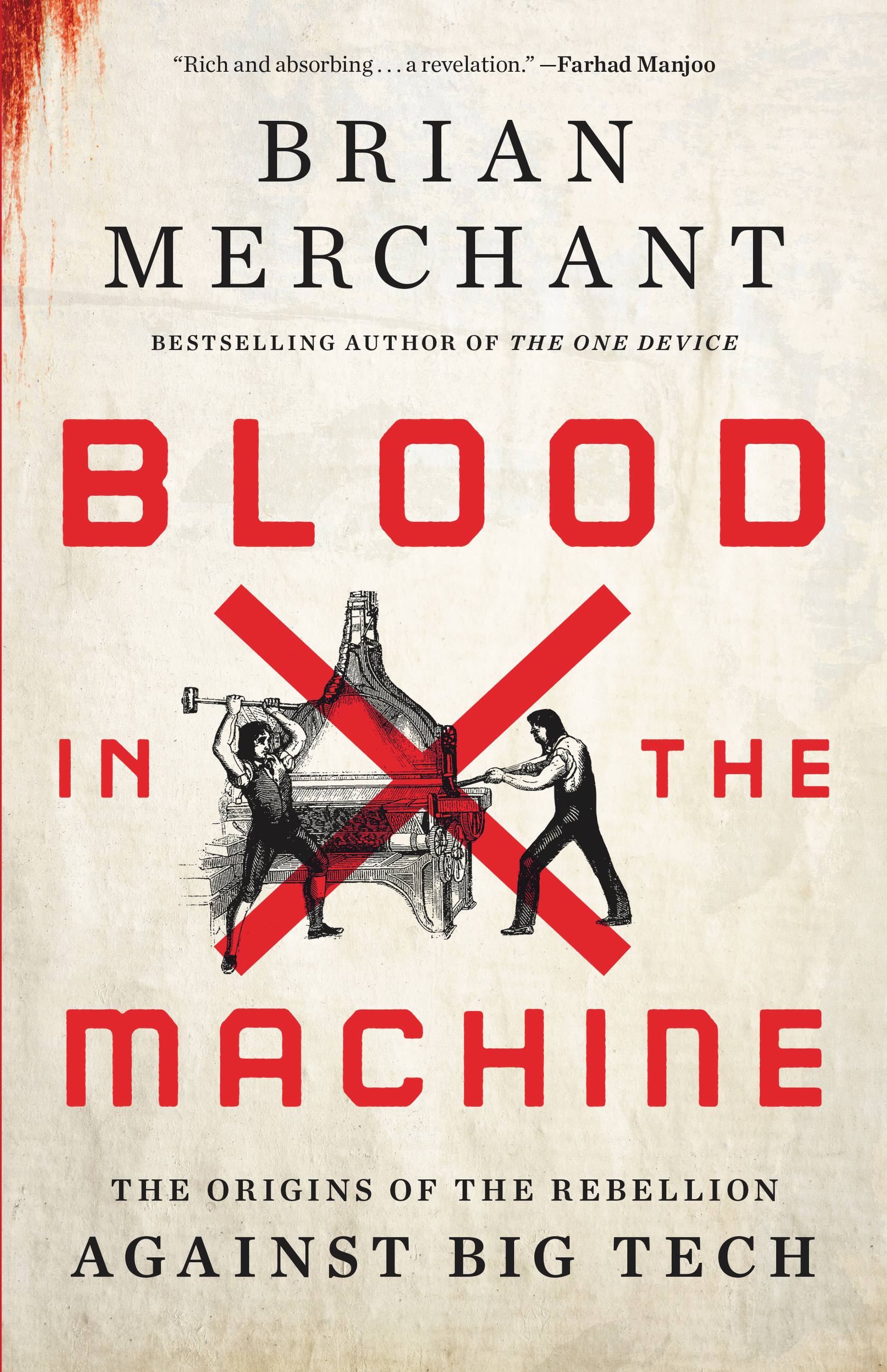

AI's copyright problem is fixable

If 2023 taught us anything, it's that companies are embedding generative AI in all manner of tools and products. But hoped-for gains in workplace productivity sit uneasily against claims of copyright infringement by the artists whose images and texts and music have helped train the large language models that underpin GenAI tools. (So much so that some developers of popular AI products, including OpenAI, Google, Microsoft, and IBM, have promised to protect their users against copyright lawsuits, although Bloomberg's Brad Stone warns that "the protections offered are narrower than what's suggested by the PR.")

Despite uncertainty about whether any specific generative AI usage actually constitutes a copyright violation, the problem must be addressed. It matters both to ensure that creators are fairly compensated when their works are used and to clear the path for organizations that want to incorporate AI but are worried about the legal risks. (Of course, this isn't the only issue deterring companies from deploying generative AI tools.)

Luckily, as Mike Loukides and I argue in a recent article in Project Syndicate, we can solve the copyright problem right now using a technique called retrieval-augmented generation (RAG). Here's how companies are already putting RAG to work:

[Developers] use retrieval-augmented generation (RAG) to allow an AI to "know about" content that isn't in its training data. If you need to generate text for a product catalog, you can upload your company's data and then send it to the AI model with the instructions: "Only use the data included with this prompt in the response." Though RAG was conceived as a way to use proprietary information without going through the labor- and computing-intensive process of training, it also incidentally creates a connection between the model's response and the documents from which the response was created. That means we now have provenance. . .
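
The provenance idea in the passage above can be sketched in a few lines. What follows is a minimal Python illustration under stated assumptions. It is not O'Reilly's implementation. A toy keyword-overlap retriever stands in for the embedding search a production RAG system would typically use, and the model call itself is omitted. The `Document` type, the `retrieve`, `build_prompt`, and `allocate_royalties` functions, the sample corpus, and the pro-rata-by-relevance royalty split are all hypothetical. The point it shows is that the passages placed in the prompt are known, so they can be credited.

```python
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str   # hypothetical identifier, e.g. book and chapter
    author: str   # hypothetical rights holder to credit and pay
    text: str


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, doc: Document) -> int:
    """Toy relevance: for each distinct query term, count its occurrences in the doc.

    Assumption: a real RAG system would more likely use embedding similarity.
    """
    doc_counts = Counter(tokenize(doc.text))
    return sum(doc_counts[term] for term in set(tokenize(query)))


def retrieve(query: str, corpus: list[Document], k: int = 2) -> list[tuple[int, Document]]:
    """Return up to k (score, document) pairs with a nonzero score, best first."""
    hits = [(score(query, d), d) for d in corpus]
    hits = [(s, d) for s, d in hits if s > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep corpus order
    return hits[:k]


def build_prompt(query: str, hits: list[tuple[int, Document]]) -> str:
    """Assemble a grounded prompt in which each passage is tagged with its source id."""
    context = "\n".join(f"[{d.doc_id}] {d.text}" for _, d in hits)
    return (
        "Only use the data included with this prompt in the response.\n"
        "Cite the [id] of every passage you rely on.\n\n"
        f"{context}\n\nQuestion: {query}"
    )


def allocate_royalties(hits: list[tuple[int, Document]], pool: float) -> dict[str, float]:
    """Hypothetical policy: split a royalty pool pro rata by retrieval score."""
    total = sum(s for s, _ in hits)
    if total == 0:
        return {}
    shares: dict[str, float] = {}
    for s, d in hits:
        shares[d.author] = shares.get(d.author, 0.0) + pool * s / total
    return shares


if __name__ == "__main__":
    corpus = [
        Document("book-1/ch3", "Author A", "Currency conversion: multiply the amount by the exchange rate."),
        Document("book-2/ch7", "Author B", "Exchange rate tables should be refreshed daily for currency work."),
        Document("book-3/ch1", "Author C", "A history of the printing press."),
    ]
    query = "How do I do currency conversion with an exchange rate?"
    hits = retrieve(query, corpus)
    print(build_prompt(query, hits))  # the model call itself is omitted
    print(allocate_royalties(hits, pool=70.0))  # {'Author A': 40.0, 'Author B': 30.0}
```

The retriever returns the documents it used. That list both grounds the prompt and serves as the attribution record. Splitting a royalty pool by relevance score is only one possible policy; a publisher could just as well weight by how much of each passage the response reproduces.
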
If we publish a human programmer's currency-conversion software in a book, and our language model reproduces it in response to a question, we can attribute that to the original source and allocate royalties appropriately.

The tools to fix the copyright issue are out there. As Mike and I conclude, "Tech companies no longer have an excuse for copyright unaccountability."

+ Mike and I shared a longer, more detailed version of our paper on O'Reilly Radar, complete with RAG-inspired ideas we're experimenting with at O'Reilly. Read it here.
+ And in case it wasn't apparent, O'Reilly believes that generative AI must be deployed in a fair and ethical way. That means compensating and giving credit to the experts publishing books, videos, courses, and other content with us when their work is used. You can learn more about O'Reilly's approach to generative AI in this open letter from our president, Laura Baldwin.
+ From Bloomberg Law: "AI's Billion-Dollar Copyright Battle Starts with a Font Designer."

We've never seen a technology adopted as fast as generative AI

If you're interested in just how deeply generative AI has permeated organizations, Mike's latest O'Reilly report, Generative AI in the Enterprise, explores adoption in exhaustive detail. Analyzing results from nearly 3,000 O'Reilly customers, Mike examines everything from model market share to top use cases, skill gaps, concerns, and challenges.

One remarkable finding: "Two-thirds (67%) of our survey respondents report that their companies are using generative AI." That's a huge rate of adoption, considering that ChatGPT is little more than a year old. As new technologies go, it's beyond compare, as Mike illustrates: "A year after the first web servers became available, how many companies had websites or were experimenting with building them? Certainly not two-thirds of them."

Here's another surprise: "26% [of organizations] have been working with AI for under a year.
But 18% already have applications in production." We're still in the early stages of generative AI, but businesses clearly believe in the promise of AI: to boost productivity, raise revenue, and more. And as Mike's reporting shows, they're making big moves so they don't get left behind.

An AI vibe check

Ben Thompson has been holding a years-long conversation on AI with Daniel Gross (who cofounded Cue and led AI at Apple) and Nat Friedman (the former CEO of GitHub) at Stratechery. In the most recent installment, they turn their attention to the human component. The discussion is all over the place. They offer a "vibe check" on the industry, get into religion, and touch on less-esoteric topics like the hubbub at OpenAI, but it's all very interesting! Here's a sampling.

Gross on spirituality:

I think we're still in the 1994 equivalent of AI where it is very much a spiritual project, and AI is a little bit different from fintech or the Internet in the sense that it's a little that as you get closer to the horizon event it only becomes more intense, because obviously the penultimate goal with AI is to, if you believe them, summon a kind of god. . . . It very much feels like . . . what I imagine walking through Los Alamos must have felt like during the Manhattan Project, where there's a real searching for a sense of belonging and meaning and intellectual curiosity that's the driving force behind all of this, and that creates, I think, distortions in the market.

And Friedman on what the future may hold:

I think the central question of AI right now is where is the asymptote and how close are we to it? If it's near and not too much higher, then this is a transformative technology and it's a new technology platform and it's an incredible gold rush and it's something normal that we can understand and there's a lot of money to be made and applications to be built. It's sort of Browser Wars 2.0 or something like that between Microsoft and Google.
If we're not near an asymptote, if, you know, my god, there is no asymptote or at least none that we can perceive, then it's hard to know what happens next, and we're probably going to have a very different civilization.

One more, from Gross on which AI is the best:

We've now reached the point where the open source, the closed source models, they're all sort of good enough to the point where picking a favorite is actually a matter of aesthetic preference and made up reasons much more than a practical one. . . . I think there's a view to which these aesthetic preference selections, "Claude feels better", "ChatGPT was mean to me", "Bard is nice", that sort of thing is going to matter a lot.

And that's just the start. They also dive into NVIDIA's DGX Cloud, the "mixture of experts" technique Mistral used to build its high-quality open source model, Google's Gemini launch, what's happening with Siri, and more. Definitely worth reading in full.

+ Google software engineer and AI researcher François Chollet has been dissecting AI "intelligence" on X. Here's a compelling thread exploring the connection between intelligence and compression, and here's another on why current approaches to AI understanding can't scale.
+ Last month I was a guest on Erik Cederwall and Sachin Gandhi's podcast, Luminary. Our conversation focused on open source, but we also talked a little about my algorithmic rents project with Ilan Strauss, Mariana Mazzucato, and Rufus Rock.

Deeper reading: Blood in the Machine: The Origins of the Rebellion Against Big Tech

People often use the term "Luddite" to disparage those resistant to new technologies. But the actual Luddite movement of the early 1800s was far more nuanced, and it can help us make sense of our own interactions with Big Tech and AI today, says tech critic and Los Angeles Times columnist Brian Merchant in his recent book, Blood in the Machine.
As Merchant explains, rather than being an antitechnology mob, the Luddites were organized workers protesting industrial technology that threatened their livelihoods. Here's how he describes the movement in an interview with Chris Taylor and Taylor Lorenz:

When the Luddites sort of led their uprising, it was as much against the material conditions which were being degraded by those machines, by those entrepreneurs and those industrialists. It was also a resistance to being forced into this new mode of work into factorization, as they would call it, having to stand at their command. They had these lives where they had autonomy. They could run their own schedule, they could work at home, they could sing songs with their families. And this new work regime, which was basically sort of inaugurating the factory system for the first time, was incredibly oppressive. They saw it. Nobody liked it. Everybody hated it. And so when the Luddites registered this complaint, this protest, it was much larger, much broader than just the cloth workers, and that's why sort of the elites of the day and the crown issuing all these proclamations, rousing troops to occupy industrial towns and areas, and they had to crush this rebellion outright because it was so popular because people cheered the Luddites in the streets as they engaged in machine breaking. They were the Robin Hood of the day.

Merchant is most interested in the way the Luddite rebellion can contextualize our current moment. And that's the book's real message, as Billy Perrigo observes in his review/interview in TIME: "If new technologies erode wages and increase wealth inequality, it's a result of a political choice by the owners of that technology, not a result of the inevitable and unstoppable march of progress." That's just as true now as it was in 1811.
And like the Luddites of history, workers today may have to fight to ensure they're apportioned some of the benefits, as we saw during last year's strikes of writers and actors and autoworkers. As Merchant argues in TIME, "We can absolutely decide how we want technology to be used." Neo-Luddism is a means of making those demands. Merchant proudly claims the title of Luddite and suggests that "you should be one, too." (Really, as Wired's Gregory Barber notes in his review, "everyone is a Luddite now.")

Here's a review from the New York Times, and another from the New Yorker. You can read an excerpt from Blood in the Machine in Fast Company. You can also hear from Merchant in this interview with Sara Goudarzi at the Bulletin of the Atomic Scientists and this interview with Sam Seder and Emma Vigeland on The Majority Report.

+ More from Merchant at the LA Times: "This Was the Year of AI. Next Year Is When You Should Worry About Your Job."

Tim O'Reilly and Peyton Joyce
